Software Engineering Skills That AI Cannot Replace

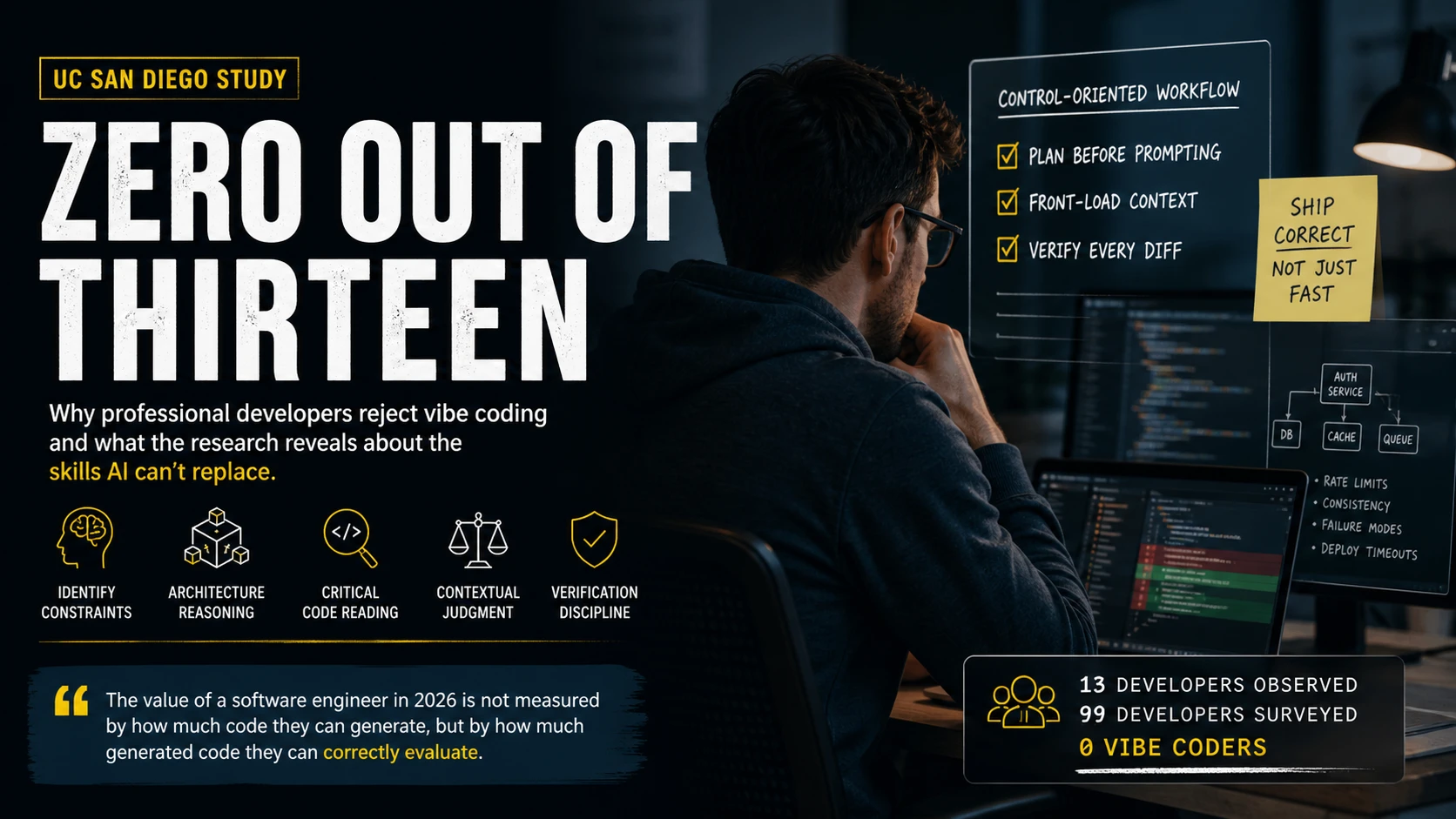

Zero Out of Thirteen

A UC San Diego research team did something most AI discourse skips entirely. They watched professional developers work. Not in a lab. Not with a benchmark. In their actual production environments, writing actual code that ships to actual users.

They observed 13 experienced developers coding with AI agents in the wild and surveyed 99 more. The headline finding makes the influencer vibe-coding demos look absurd: not a single professional developer in the study practiced vibe coding. Zero. Out of thirteen observed and ninety-nine surveyed.

This is not a survey of luddites. These are developers who use AI tools every day. They use Copilot, Cursor, Claude Code. They are not rejecting the tools. They are rejecting a specific relationship with the tools. The one where you give up control and trust the output because it looks right.

The study, published by Huang et al. in late 2025 and now going viral with over 172,000 views on X, provides the first empirical evidence of something many senior engineers have felt intuitively: the software engineering skills that AI cannot replace are exactly the ones that vibe coding atrophies.

What the Control-Oriented Workflow Looks Like

The researchers identified a specific pattern among productive AI-assisted developers. They called it a “control-oriented workflow.” That sounds academic, but what it describes is concrete.

These developers do three things that vibe coders do not:

They plan before prompting. They do not open their AI tool and start typing “build me a user authentication system.” They think about the problem first. They identify constraints. They decide what the implementation should look like before they ask AI to generate it. The planning is the skill. The prompting is just communication.

They front-load context. Experienced developers spend time telling the AI about their codebase, their architecture, their team’s conventions, and their constraints. They treat the AI like a junior developer who needs a thorough brief, not like a senior engineer who understands the system. This works because it is accurate. AI does not understand your system. It predicts tokens that look like they belong in your system.

They verify every diff. This is the one that separates the study’s professionals from the vibe coding crowd most sharply. Every developer in the study read the AI-generated code line by line before merging. They refused to accept code they had not personally understood. Not skimmed. Understood.

This is expensive. It is slow compared to accepting suggestions and moving on. But the developers in the study were not optimizing for speed of generation. They were optimizing for speed of correct shipping. And those are very different metrics.

Why Vibe Coding Breaks in Production

Andrej Karpathy popularized the term “vibe coding” to describe a flow-state relationship with AI where you trust the output, accept suggestions quickly, and ride the momentum. For throwaway prototypes and personal experiments, this can work beautifully. The developers in the UC San Diego study said exactly that: vibe coding is fine for things that do not ship.

But production code operates under constraints that vibe coding cannot see. Your API has rate limits your AI tool does not know about. Your database has consistency requirements that emerge from business rules discussed in meetings no AI attended. Your deployment pipeline has a 30-second health check timeout that means certain initialization patterns will silently fail in staging but work perfectly on localhost.

These are not edge cases. They are the normal operating conditions of production software. And they are invisible to a model that predicts code from patterns in training data.
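To make the health-check example concrete, here is a minimal sketch of how the same startup code can pass on localhost and fail in staging. Only the 30-second timeout comes from the scenario above. The function names, the per-item cost, and the dataset sizes are hypothetical values chosen for illustration, not figures from the study.

```python
HEALTH_CHECK_TIMEOUT_S = 30.0  # the 30-second budget from the scenario above


def estimated_startup_seconds(item_count: int, seconds_per_item: float) -> float:
    """Estimate how long an eager warm-up blocks startup (hypothetical cost model)."""
    return item_count * seconds_per_item


def passes_health_check(startup_seconds: float,
                        timeout_s: float = HEALTH_CHECK_TIMEOUT_S) -> bool:
    """A service still initializing when the probe times out is treated as unhealthy."""
    return startup_seconds < timeout_s


# Hypothetical numbers: a small local dataset versus a staging-sized one.
local = estimated_startup_seconds(item_count=500, seconds_per_item=0.01)
staging = estimated_startup_seconds(item_count=20_000, seconds_per_item=0.01)

print(passes_health_check(local))    # True: about 5 seconds of warm-up
print(passes_health_check(staging))  # False: about 200 seconds of warm-up
```

Nothing in the code is wrong in isolation. The failure only appears once you know the timeout and the real data volume, which is exactly the context a reviewer has to bring.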

The study found that experienced developers understood this intuitively. They did not need someone to explain why vibe coding is risky. They had seen code that passes tests but fails in production. They had debugged the output of a confidence engine that generated plausible solutions without actual understanding.

This connects to a broader pattern in the vibe coding versus agentic coding discussion. The difference is not about which tools you use. It is about whether you maintain the engineering judgment to evaluate what those tools produce.

The Skill Inversion Effect

Here is the counterintuitive finding buried in this research: AI coding tools make engineering skills more important, not less. The study data implies something I call the skill inversion effect.

In the pre-AI era, the skill hierarchy was straightforward. Better developers wrote better code, faster. The gap between a mid-level and senior developer showed up in velocity and quality of output.

In the AI era, the hierarchy inverts. Every developer can generate code at approximately the same speed. The AI equalizes generation velocity. What it does not equalize is evaluation quality.

A senior developer using Claude Code who spots a subtle concurrency issue in the generated output saves their team a production incident worth 4 hours of debugging plus the customer impact. A mid-level developer who accepts the same code because it looks correct introduces that incident.
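The kind of issue I mean is often a check-then-act race. The sketch below is an illustration of my own, not code from the study: `Inventory`, `reserve_unsafe`, and `reserve_safe` are hypothetical names. The unsafe version reads as correct in a quick skim, which is the point.

```python
import threading


class Inventory:
    """Hypothetical stock counter used to illustrate a check-then-act race."""

    def __init__(self, stock: int) -> None:
        self.stock = stock
        self._lock = threading.Lock()

    def reserve_unsafe(self) -> bool:
        # Looks fine in review, but two threads can both pass the check
        # before either decrements, overselling the last unit.
        if self.stock > 0:
            self.stock -= 1
            return True
        return False

    def reserve_safe(self) -> bool:
        # The lock makes the check and the decrement a single atomic step.
        with self._lock:
            if self.stock > 0:
                self.stock -= 1
                return True
            return False


def run(reserve, workers: int) -> int:
    """Call `reserve` from `workers` threads and count successful reservations."""
    results = []
    threads = [
        threading.Thread(target=lambda: results.append(reserve()))
        for _ in range(workers)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sum(results)


inventory = Inventory(stock=10)
sold = run(inventory.reserve_safe, workers=50)
print(sold, inventory.stock)  # 10 0
```

The unsafe variant will usually pass a single-threaded unit test, so the defect has to be caught by someone reading the code and asking what happens under concurrent access.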

I tracked this pattern across teams I have worked with. In a team of 8 developers using AI tools for 6 months, the 3 developers with the strongest code review backgrounds caught an average of 2.3 significant AI-generated issues per week that would have reached production otherwise. The 5 developers who relied more heavily on AI without deep review habits caught 0.4 issues per week. Same tools. Same codebase. Different skills brought to the interaction.

The gap is not about AI literacy. Everyone on that team knew how to use the tools. The gap is about software engineering skills that predate AI: reading code critically, understanding system behavior under load, recognizing design patterns that create maintenance debt 6 months later.

Figure (flowchart): AI generates code, then the developer evaluates it. With strong review skills, the developer catches subtle issues and ships reliable code. With weak review skills, the developer accepts plausible output and ships latent defects.

This is the inversion: the developers who benefit most from AI tools are the ones who needed them least. Their pre-existing judgment lets them use AI as an accelerator rather than a crutch. The developers who most want AI to replace their thinking are the ones most harmed when it does.

The Five Skills the Study Shows Matter Most

Drawing on the UC San Diego findings and on what I have observed in practice, these are the software engineering skills that AI cannot replace and that the study’s most productive developers demonstrated:

1. Constraint identification. The ability to list what the code must satisfy before writing it. Not just requirements. Constraints. Performance budgets, consistency guarantees, failure modes, resource limits. AI does not know these unless you tell it, and you cannot tell it what you do not know yourself.

2. Architectural reasoning. Understanding how a change fits into the larger system. The study’s developers did not evaluate code in isolation. They evaluated it in context: how does this interact with the existing authentication flow? What happens under concurrent access? Does this create a coupling that makes the next feature harder?

3. Critical code reading. The skill of reading code and asking “what could go wrong here?” rather than “does this look right?” These are fundamentally different questions. The first requires imagining failure scenarios. The second requires only pattern matching, which is exactly what AI already does.

4. Context-dependent judgment. Knowing that a technically correct solution is wrong for your specific situation. A caching layer that reduces latency but violates your data freshness SLA. A database index that speeds up reads but tanks write throughput during your daily batch import. AI generates technically valid code. Your job is knowing when technically valid is not actually valid.

5. Verification discipline. The habit of reading every line. Not some lines. Not the parts that look suspicious. Every line. This sounds tedious, and it is. It is also what separated every single developer in the study from the vibe coding approach they all rejected.
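The caching example from skill 4 can be sketched in a few lines. This is a minimal illustration under assumed names: `CacheEntry`, `read_with_freshness`, and the 60-second freshness budget are hypothetical, and a real SLA would come from your own system. A cache that ignores the staleness check is still technically valid code. It just violates a constraint the model was never told about.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass
class CacheEntry:
    value: str
    stored_at: float  # seconds, on whatever clock the caller passes in


def read_with_freshness(
    cache: Dict[str, CacheEntry],
    key: str,
    now: float,
    max_staleness_s: float,
    load: Callable[[str], str],
) -> Tuple[str, str]:
    """Serve from cache only while the entry is within the freshness budget."""
    entry = cache.get(key)
    if entry is not None and now - entry.stored_at <= max_staleness_s:
        return entry.value, "cache"
    # Entry is missing or too old: reload from the source of truth.
    value = load(key)
    cache[key] = CacheEntry(value, now)
    return value, "source"


cache: Dict[str, CacheEntry] = {}
first = read_with_freshness(cache, "price", 0.0, 60.0, lambda k: "v1")
second = read_with_freshness(cache, "price", 30.0, 60.0, lambda k: "v2")
third = read_with_freshness(cache, "price", 120.0, 60.0, lambda k: "v2")

print(first)   # ('v1', 'source')
print(second)  # ('v1', 'cache')
print(third)   # ('v2', 'source')
```

Passing `now` in explicitly keeps the sketch deterministic. The design point is that the freshness budget is an input the reviewer must supply, because it comes from the business rule and not from the code.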

What This Means for Your Career Right Now

The UC San Diego study validates what the market is already signaling through other data points. Google announced that 75% of new code inside Google is AI-generated and then reviewed by humans. Sierra AI redesigned its engineering interviews to test evaluation ability rather than generation ability. The best engineers are writing code by hand for critical logic specifically to maintain the judgment that AI threatens to atrophy.

If you are a mid-level developer wondering which skills to invest in, the answer from this research is unambiguous: invest in the skills that let you evaluate AI output critically. That means:

Practice code review as a skill, not a chore. Read other people’s code actively. Ask yourself what you would change and why before looking at existing review comments. Build the habit of evaluation that the study’s developers demonstrated.

Learn to reason about systems, not just functions. A function can be correct in isolation and wrong in context. The ability to hold the larger system in your head while evaluating a local change is the skill that takes years to build and minutes to apply.

Write critical code by hand regularly. Not because AI cannot write it. Because the act of struggling with a problem builds the pattern recognition that lets you spot when AI gets it wrong. The study’s developers maintained that muscle memory deliberately.

Understand your production environment deeply. Know the deployment pipeline. Know the failure modes. Know the constraints that no AI model was trained on. This contextual knowledge is what makes your review of AI-generated code valuable rather than ceremonial.

The Implication Nobody Wants to Hear

The study’s finding that zero professionals vibe code is not a quirky data point. It is a signal about the future of the profession.

The value of a software engineer in 2026 is not measured by how much code they can generate. It is measured by how much generated code they can correctly evaluate. That evaluation capacity comes from one place: deliberate practice of the skills that require human judgment. Code review. Systems thinking. Constraint identification. Production reasoning.

Every hour spent accepting AI suggestions without deep evaluation is an hour where those skills atrophy. Every hour spent practicing critical evaluation is an hour where those skills compound. The developers in the UC San Diego study chose compounding over atrophy. The question is whether you will too.

The data is clear. The tools are improving. And the software engineering skills that AI cannot replace are the same ones it never could: the judgment to know when something that looks right is actually wrong. Build those skills deliberately, or watch them erode by default. There is no third option.
